Latest from the Databricks world.

Recent uploads from the Databricks team and a curated set of community creators. Filter by what you actually want to see.

This week

6 videos

News

01 Fundamentals of AI | ML | DL | LLM & GenAI | How LLMs work | What are Models and Agents | NN

The video explains the fundamental concepts of AI, ML, DL, LLMs, and GenAI, illustrating their hierarchical relationship as subsets of each other. It also defines what models are (mathematical formulas trained on data) and how agents combine LLMs with tools and optional memory to perform autonomous tasks.

Tutorials

Apache Spark Streaming Real-Time Mode - Latency Demo

The video demonstrates how to deploy and run Apache Spark Streaming in Real-Time Mode (RTM) using a Declarative Automation Bundle. It shows that RTM significantly reduces latency compared to microbatch mode, reaching a P50 of 26 ms and a P95 of 50 ms in a simplified setup without an external messaging bus.
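For readers unfamiliar with the P50 and P95 figures quoted here, the following is a minimal sketch of how such percentiles can be computed from a list of latency samples using the nearest-rank method. The sample values and the `percentile` helper are made up for illustration; they are not taken from the video, and the video may compute its percentiles differently.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical per-record latencies in milliseconds (illustrative only).
latencies_ms = [12, 15, 17, 19, 20, 21, 23, 28, 35, 60]

p50 = percentile(latencies_ms, 50)  # typical latency
p95 = percentile(latencies_ms, 95)  # tail latency
print(p50, p95)
```

P50 describes the typical record, while P95 is dominated by the slowest few, which is why the two are usually reported together.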

Tutorials

Air Traffic Control with Apache Spark Structured Streaming Real-Time Mode

The video demonstrates building a real-time air traffic control application using Apache Spark Structured Streaming Real-Time Mode, Lakehouse, and Databricks Apps. This system processes live flight telemetry, detects congestion, and generates alerts with sub-second end-to-end latency, all within a single Databricks platform.

Tutorials

Step-by-Step: Connecting Databricks to Excel Using the Databricks Excel Add-In

The Databricks Excel add-in provides governed access to Databricks lakehouse data directly within Excel, enabling business users to query data without writing SQL. The video demonstrates how to install the add-in yourself by editing its manifest XML file and uploading it to Excel for the web.

News

Lakebase and PG Vector: Vector Search of the Future?

The video demonstrates how to implement vector search using Lakebase and pgvector within Databricks, focusing on two patterns: Lakebase native and reverse ETL from the lakehouse. It walks through setting up a maintenance copilot application that uses pgvector for semantic search, joins, and filtering on maintenance logs, covering the process from data embedding to app deployment and job scheduling for continuous updates.
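The idea of combining semantic search with ordinary filtering can be sketched in a few lines of plain Python. This is an illustration of the concept only: it does not call Lakebase or pgvector, and the log rows, the three-dimensional embeddings, and the `search` helper are all hypothetical.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity; smaller means the vectors point in a more similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


# Hypothetical maintenance logs with tiny made-up embeddings.
logs = [
    {"id": 1, "asset": "pump-a", "text": "bearing vibration", "embedding": [0.9, 0.1, 0.0]},
    {"id": 2, "asset": "pump-a", "text": "seal leak", "embedding": [0.1, 0.9, 0.0]},
    {"id": 3, "asset": "fan-b", "text": "bearing noise", "embedding": [0.8, 0.2, 0.1]},
]


def search(query_embedding, asset, k=1):
    """Filter by a regular column first, then rank the survivors by vector distance."""
    candidates = [row for row in logs if row["asset"] == asset]
    candidates.sort(key=lambda row: cosine_distance(row["embedding"], query_embedding))
    return [row["id"] for row in candidates[:k]]


top = search([1.0, 0.0, 0.0], asset="pump-a")
print(top)
```

In a Postgres-backed setup the filter and the distance ranking would typically live in a single SQL query, so the database can apply both in one pass.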

News

Lovable now integrates with Databricks

Lovable now integrates with Databricks, allowing users to build data applications and tools using plain English prompts to access and write data to their Databricks Lakehouse. This connector enables rapid development of dashboards and applications while maintaining data governance and controlled access to specific catalogs, schemas, and tables.

Last week

12 videos

Releases

How OpenAI and Databricks are working together

Databricks and OpenAI are partnering to help enterprises deploy and adopt AI, with Databricks focusing on secure data access and management for AI applications through products like Genie and AI Gateway. The video highlights GPT 5.5's enhanced planning capabilities and its leading performance in office knowledge work benchmarks, demonstrating its impact beyond coding to automate internal business processes.

News

Making AI understand your data - part 2 #databricks #data #ai

Databricks metric views support advanced data definitions using joins, including nested joins on Databricks Runtime 17.1 and above, and complex calculations with windowing for time-based analysis. Materialization can precompute popular metric views with incremental updates, and semantic metadata can be added for non-technical users on Databricks Runtime 17.2 and above.

News

How Techcombank Scales AI Banking to 16M Customers with Databricks

Techcombank uses Databricks to power its AI banking platform, serving 16.2 million customers and processing 8 billion daily transactions, backed by a feature store of more than 12,000 features. This enables the bank to make data-driven decisions, automate lead allocation with over 8,000 features, and achieve a 3x conversion uplift, improving both productivity and customer experience.

News

The Restaurant Metaphor: Why BI Teams Get Overloaded

The video uses a restaurant metaphor to explain why Business Intelligence (BI) teams become overloaded. It likens IT to kitchen staff, data to ingredients, analysts to waiters, and the business to customers, highlighting the bottleneck created when too many customer requests overwhelm the limited number of analysts.

News

Git-Style Database Branching (But Actually Fast) #database #lakebase

Lakebase enables Git-style database branching by creating metadata-only branches instead of full data copies. This allows users to create dev, QA, and prod branches that point to the main branch without duplicating the entire dataset.
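To make "metadata-only branch" concrete, here is a minimal copy-on-write sketch in Python. It is a toy model under stated assumptions, not how Lakebase is implemented: the `Branch` class, its methods, and the keys are hypothetical. A branch stores only the rows written on it and falls back to its parent for everything else.

```python
class Branch:
    """A branch that records only its own changes and defers all other reads to its parent."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.changes = {}  # key -> value written on this branch only

    def write(self, key, value):
        self.changes[key] = value

    def read(self, key):
        branch = self
        while branch is not None:
            if key in branch.changes:
                return branch.changes[key]
            branch = branch.parent
        raise KeyError(key)


main = Branch("main")
main.write("customer:1", "Alice")

dev = Branch("dev", parent=main)  # creating the branch copies no data
dev.write("customer:1", "Alice (test)")

print(main.read("customer:1"), "|", dev.read("customer:1"))
```

Creating `dev` costs the same no matter how much data `main` holds. One simplification to note: in this toy, later writes to `main` remain visible through `dev`, whereas a real branching system would usually pin the branch to a point in time.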

Community

From Notebook to Production: MLOps Quickstart

The video demonstrates how to apply MLOps best practices on Databricks using a quickstart repository, covering data ingestion, feature preprocessing, model training, deployment, and inference. It showcases Databricks tools like MLflow and Unity Catalog for managing the ML lifecycle, including version control, experiment tracking, model governance, and automated deployment across development and production environments.

Tutorials

Governed Tags & Data Classification in Databricks | ABAC Foundations

Databricks now offers governed tags and automated data classification to identify sensitive information like PII. This enables Attribute-Based Access Control (ABAC) policies for masking or hiding data based on user roles, without altering query patterns.
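The core idea of tag-driven masking can be sketched without any platform at all. The snippet below is a conceptual illustration only: the `column_tags` mapping, the `pii` tag, the `pii_readers` group, and the `read_row` helper are hypothetical names, and real ABAC policies in Unity Catalog are defined through the platform rather than in application code.

```python
# Hypothetical governed tags attached to columns (illustrative names only).
column_tags = {"email": {"pii"}, "country": set()}


def read_row(row, user_groups):
    """Return the row with pii-tagged columns masked unless the caller may see them."""
    can_see_pii = "pii_readers" in user_groups  # hypothetical group name
    masked = {}
    for column, value in row.items():
        is_pii = "pii" in column_tags.get(column, set())
        masked[column] = "***" if is_pii and not can_see_pii else value
    return masked


row = {"email": "ana@example.com", "country": "NO"}
analyst_view = read_row(row, {"analysts"})
steward_view = read_row(row, {"pii_readers"})
print(analyst_view, steward_view)
```

Both callers issue the same read; only the attributes of the column and of the caller decide what comes back, which is what "without altering query patterns" refers to.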

News

GenAI - For Data Engineers Agenda & Introduction | LLM & Agentic AI | LangChain & LangGraph | Claude

This video introduces a new course, "GenAI for Data Engineers," designed to teach data engineers how to leverage generative AI, LLMs, and agentic AI. The course covers the basics of LLMs, building agents with LangChain and LangGraph, using Claude Code, and applying agentic AI within Databricks and data engineering workflows.

News

Reverse ETL: Exposing Gold Layer Data to Lakebase!

Reverse ETL exposes gold-layer tables from a medallion architecture to Lakebase. Applications can then read from and write to these exposed tables, such as a customer dimension table.

Tutorials

Real-Time ML Lookups: Lakebase for Zero Latency!

Lakebase enables real-time ML lookups by syncing data from Delta tables, offering a low-latency alternative to querying large gold tables directly. This reverse ETL process allows ML models to access necessary data quickly for real-time predictions.

News

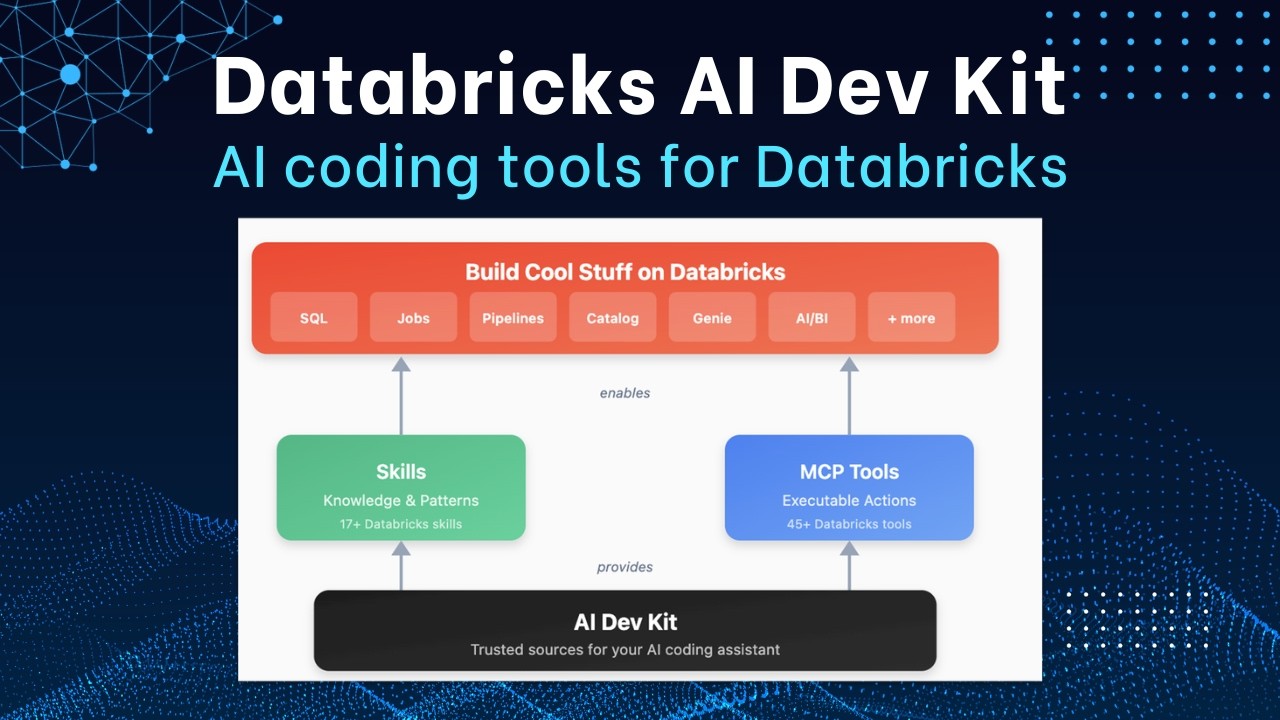

Databricks AI Dev Toolkit: 10x Your Development

The Databricks AI Dev Toolkit is a repository created by the field engineering team to enable MCP tools and skills for building on Databricks. It can be attached to a coding agent to accelerate development on Databricks tenfold.

News

How Agentic AI is Rewriting Healthcare | NVIDIA x Databricks

Agentic AI is profoundly changing healthcare by automating administrative tasks for professionals and accelerating scientific research, such as drug discovery. Databricks and NVIDIA are collaborating to build an AI-ready data layer and open-source platforms to unlock insights from digitized medical data, enabling these agentic systems.

Week of Apr 13

7 videos

News

Zerobus Ingest and Lakebase in Action: Data Streaming with Databricks

The video demonstrates a real-time IoT data streaming application built with Zerobus for ingestion, Lakebase for low-latency serving, and Databricks Apps for the front end and back end, without relying on Kafka. It shows how thousands of concurrent IoT events from mobile phone sensors worldwide are ingested, processed, and visualized on a map, with traces served by Lakebase for fast access.

News

Making AI understand your data - part 1 #ai #data #texttosql #code #vibecoding

Databricks metric views help AI understand data by defining official sources and business logic, preventing the inconsistent results that direct queries can produce. The video demonstrates creating a metric view in Unity Catalog, which can then be used with SQL or AI text-to-SQL tools for consistent data analysis.

Tutorials

Enable Storage Firewall in Databricks - Security Tutorial

This video demonstrates how to enable firewall support for an Azure Databricks workspace storage account to restrict public network access. It outlines prerequisites, guides through creating private endpoints, verifying network connectivity configurations, and finally executing a PowerShell command to enable the storage firewall.

News

Databricks AI Dev Toolkit: Empowering Workspace Users

The Databricks AI Dev Toolkit gives workspace users, even those unfamiliar with IDEs, access to AI tools via a Databricks app serving an MCP server. It supercharges the Genie Code agent with MCP tools to automate resource creation.

News

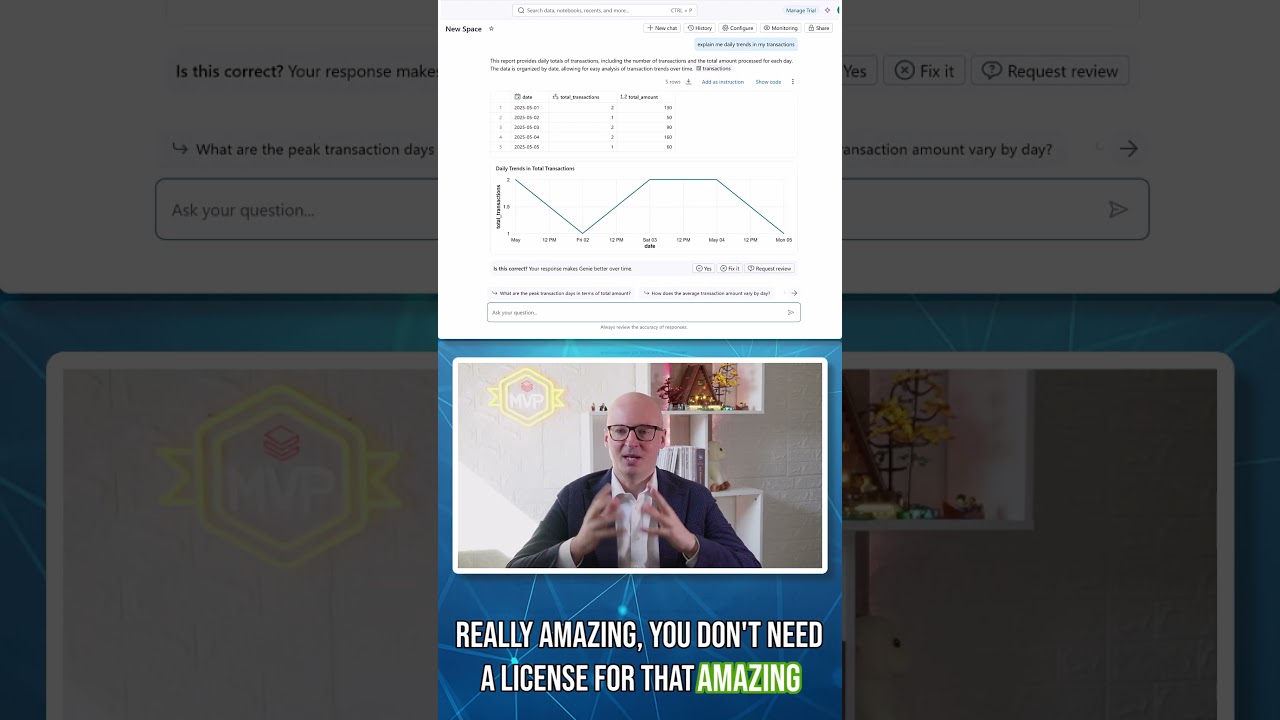

Ask Genie Anywhere | Bring AI/BI Genie to Microsoft Teams & M365 Copilot via Copilot Studio

Databricks' AI/BI Genie, a data analyst agent, now integrates natively with Microsoft Copilot Studio, allowing organizations to embed Genie into Microsoft Teams, M365 Copilot, and SharePoint. This enables users to ask data questions and receive insights directly within their collaboration tools, without leaving their workflow.

News

10 Data Warehouse Migration Myths Blocking AI-readiness

The video debunks three myths about data warehouse migration to Databricks: the need for a massive new team, migrations being a sunk cost, and projects always blowing past deadlines. It explains that modern lakehouse architecture empowers existing teams, consolidating initiatives removes complexity, and a phased approach delivers value quickly.

News

Databricks Apps vs Model Serving: Authentication, Cost, and Performance Compared

Databricks Apps are now the recommended first choice for deploying agents due to their flexibility in handling full-stack applications with multiple components, offering faster iteration and local testing compared to Model Serving. Model Serving remains suitable for use cases prioritizing high QPS, governance features like AI Gateway, inference tables, and guardrails, or when scaling to zero is acceptable for cost optimization.

Week of Apr 6

23 videos

News

Gainwell Transforms Health Data with Databricks on AWS

Gainwell Technologies uses Databricks on AWS to modernize Medicaid and public health programs, enabling rapid data analysis and improved team collaboration. This platform helps drive health outcomes and lower care costs by leveraging AI to quickly process medical records for tasks like prior authorizations, reducing review times from 45 to under 10 minutes.

Events

Strategic App Expansion and the Power of Proprietary Data | Ali Ghodsi at HumanX

Databricks plans to strategically expand its SaaS application offerings, focusing on areas where proprietary data, security, and governance create a strong competitive moat. The company will prioritize applications that leverage its expertise in massive data processing.

Events

How Databricks Manages Enterprise Data and AI | Ali Ghodsi at HumanX

Databricks centralizes an organization's data from various systems into a Lakehouse, securing it and setting access rules. This consolidated and secured data then feeds into AI agents, models, and analytics for business forecasting and insights.

Events

Solving the AI Reliability Gap | Ali Ghodsi at HumanX

AI agents currently struggle with end-to-end tasks due to a lack of context, not intelligence. Addressing this reliability gap requires capturing context and changing organizational processes, a multi-year effort that Databricks is focused on.

Events

Three Things Required for Deeper Insights from AI | Ali Ghodsi at HumanX

Databricks enables deeper AI insights by combining agents and AI with a robust database and an analytics platform. This approach allows enterprises to leverage their proprietary data for predictive analytics beyond what traditional SaaS applications offer.

Events

AI Productivity and the PC Revolution Analogy | Ali Ghodsi at HumanX

AI offers 20-30% immediate productivity gains, especially in coding, but its full potential is hindered by a lack of context. Achieving greater automation requires re-engineering entire enterprise processes, similar to how early PC users initially treated them as typewriters before fully integrating them.

Events

How Databricks Genie is Transforming Data Analysis in Minutes | Ali Ghodsi at HumanX

Databricks Genie allows scientists to quickly query complex data, like adverse effects in obesity studies, receiving accurate, referenced answers in minutes instead of months. Businesses like EasyJet use Genie to build agents that combine real-time data on seat availability, competitive pricing, and demand to dynamically set prices, a process that previously took months.

Events

How Novo Nordisk Uses Databricks Genie for Research | Ali Ghodsi at HumanX

Novo Nordisk utilizes Databricks Genie to enable its scientists to query data warehouses and databases. This allows researchers to ask complex questions about studies, such as adverse effects, and receive accurate, statistically referenced answers.

Events

AI Cut Exploit Time to 1.3 Days | Ali Ghodsi at RSAC 2026

AI has drastically reduced the mean time to exploit a vulnerability from over two years in 2018 to an average of 1.3 days in 2026. This acceleration, particularly since ChatGPT's release, indicates AI's role in rapidly weaponizing CVEs.

Events

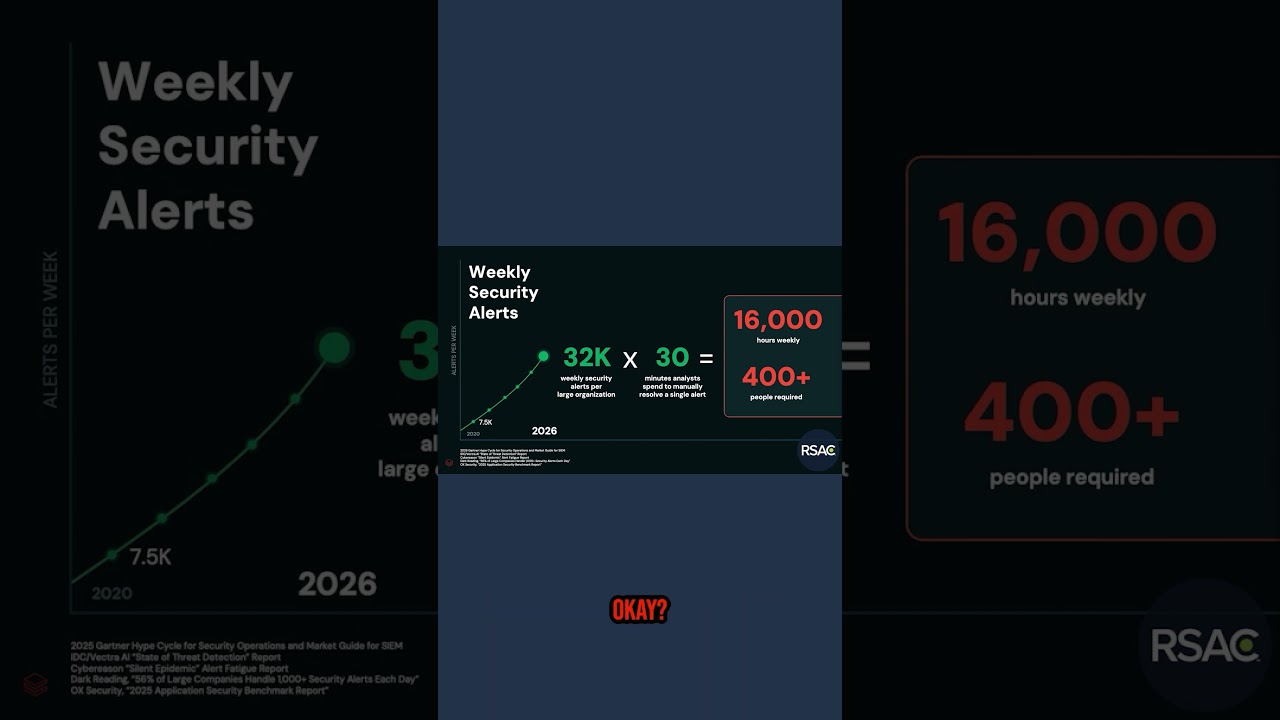

Managing 32,000 Weekly Security Alerts | Ali Ghodsi at RSAC

Weekly security alerts for a reasonably sized organization are projected to increase from 7,500 in 2020 to 32,000 in 2026, requiring over 400 full-time staff to manually process. This demonstrates the unsustainability of current manual security alert management and the urgent need for automated solutions.
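A quick back-of-the-envelope check shows what these figures imply per person. The alert counts and the staff figure of 400 come from the summary above; the 40-hour working week is an assumption added here purely for illustration.

```python
weekly_alerts_2020 = 7_500
weekly_alerts_2026 = 32_000
staff_needed_2026 = 400  # the summary says "over 400", so this is a lower bound

growth_factor = weekly_alerts_2026 / weekly_alerts_2020      # about 4.27x
alerts_per_person = weekly_alerts_2026 / staff_needed_2026   # at most 80 per week

# Assumption: a 40-hour working week spent entirely on alert triage.
minutes_per_alert = 40 * 60 / alerts_per_person              # 30 minutes each

print(round(growth_factor, 2), alerts_per_person, minutes_per_alert)
```

Under that assumption, the projection corresponds to roughly half an hour of analyst time per alert.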

Events

The Case for Open Data Architecture | Ali Ghodsi at RSAC 2026

The video advocates for an open data architecture where organizations store their data in open formats on data lakes, preferably in the cloud, to avoid vendor lock-in and control costs. This approach allows for using various tools to access and manage data, with federation technology enabling access to data in proprietary systems during a gradual migration.

Events

The Limits of Human-Led Security Operations | Ali Ghodsi at RSAC

Current Security Information and Event Management (SIEM) systems are limited by ingestion-based pricing models, leading to incomplete data capture and a lack of long-term historical analysis. Furthermore, detection, investigation, and threat hunting within these systems are largely manual, leaving security operations teams overwhelmed and able to detect only a fraction of potential threats.

Events

Why Legacy SIEM Models Are Struggling | Ali Ghodsi at RSAC 2026

Legacy SIEM models struggle against AI-driven agent swarms because they rely on incomplete data, human SOC teams, and proprietary silos. This approach is unsustainable, leading to the prediction that AI will replace SIEM this year.

News

Databricks News: AUTO CDC, Workspace skills, Ask Genie, and Type widening

Databricks introduces AUTO CDC for efficient change data feed processing, notebook tags and governed tags for better organization, and workspace skills that customize Ask Genie's responses. Databricks also adds type widening for streaming tables, allowing column data types to widen automatically to accommodate larger incoming values.

Events

The 1.3 Day Exploit: How AI is Accelerating Cyber Threats | Ali Ghodsi at RSAC 2026

AI has drastically reduced the mean time to exploit vulnerabilities from over two years in 2018 to an average of 1.3 days by 2026. This acceleration, particularly since ChatGPT's release, indicates AI is now automating cyber threat exploitation.

News

Why Manual Security Operations are Failing in 2026 | Ali Ghodsi at RSAC

Manual security operations are failing because the volume of weekly security alerts is projected to increase from 7,500 in 2020 to 32,000 in 2026, requiring an unsustainable 400+ full-time employees for an average organization. This exponential growth in alerts makes it impossible for human teams to process and respond effectively.

Tutorials

Lakebase - OLTP Workloads on Databricks!

Lakebase is a fully managed, serverless PostgreSQL offering from Databricks that decouples compute and storage, enabling independent scaling, auto-scaling to zero, and deep integration with the Databricks Lakehouse. It supports reverse ETL to bring data from the Lakehouse into Lakebase for OLTP applications and forward ETL to sync transactional data back to the Lakehouse for analytics.

Tutorials

How to Get AI Dev Tools Running in Databricks Today #tutorial #AI #coding

The video demonstrates how to enable the Databricks AI Dev Toolkit within the Databricks workspace. It addresses the challenge of setting up these AI development tools for users who prefer the Databricks workspace over a local IDE.

Releases

Databricks Genie Code, Carl, Bull**** Bench & more! | AI Newsround - March '26 | Advancing Analytics

The video discusses Databricks' new AI tools: Genie Code for autonomous data work, and Carl for faster, cost-efficient enterprise knowledge agents built with custom reinforcement learning. It also covers Bull**** Bench V2, which evaluates AI models' ability to detect and push back on nonsense, along with updates to various models like Qwen 3.5, Gemini 3.1 Flash-Lite, and OpenAI's GPT-5.3 Instant, 5.4, Mini, and Nano, highlighting their focus on agent capabilities and cost-efficiency.

Tutorials

Easily create metric tracking tables using Spark Declarative Pipelines in Databricks

The video demonstrates how to create metric tracking tables in Databricks using Spark Declarative Pipelines. It shows how to use the create_auto_cdc_from_snapshot_flow function to automatically track changes in a materialized view over time, enabling historical analysis for dashboards.

News

Stop Guessing Table Health — Let These Dashboards Tell You

Databricks offers two dashboards for monitoring table health and access: the Table Access Advisor and the Table Health Advisor. These dashboards provide insights into table ownership, read/write patterns, staleness, optimization status, and underlying file structures, helping users identify ghost tables and ensure best practices.

Tutorials

From Excel to AI Agents: The Evolution of BI Explained

The video explains the evolution of Business Intelligence (BI) through four phases, from IT-centric to analyst-driven, then semantic layers, and finally to a future where AI agents are primary BI users. It demonstrates how Databricks' BI stack, including Dashboards, Genie (natural language interface), Metric Views (semantic layer), and Databricks One (serving layer), addresses these evolving needs by providing a unified, open, and AI-ready platform.

Tutorials

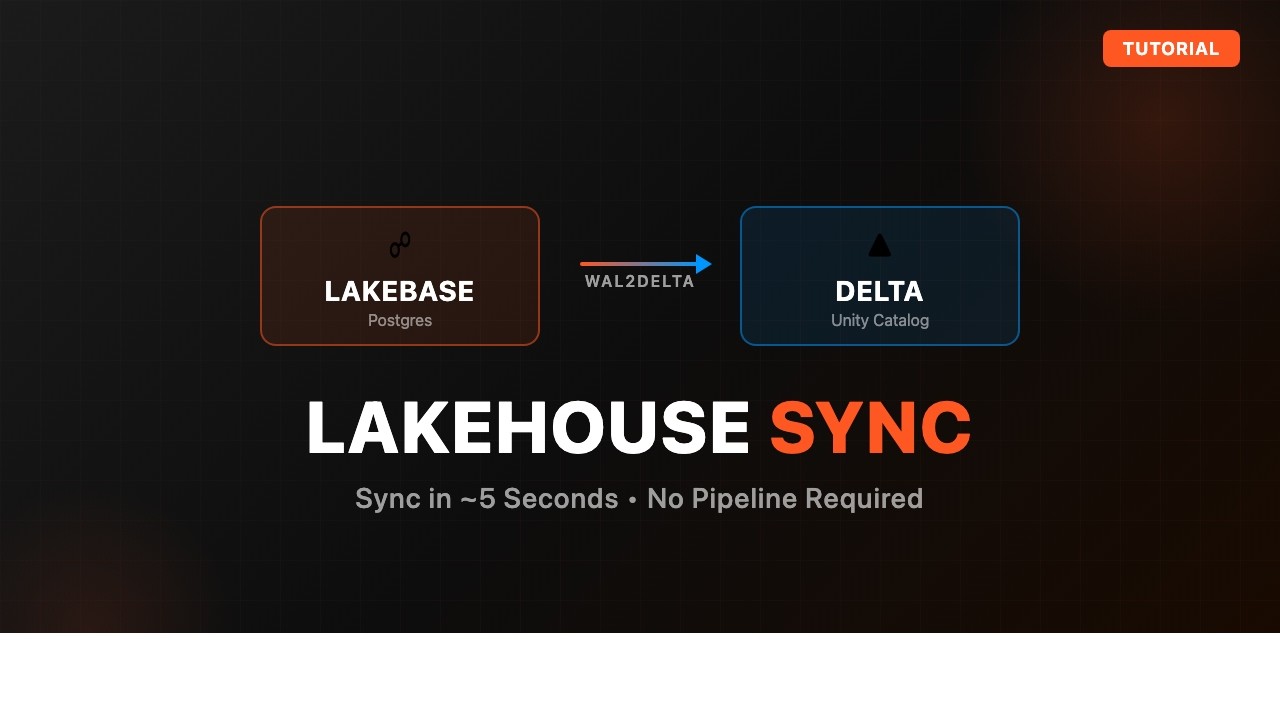

How to Sync Lakebase Tables to Delta with Lakehouse Sync

Databricks demonstrates how to sync Lakebase PostgreSQL tables to Delta tables within a Databricks Lakehouse using the Lakehouse Sync feature. This process enables analytical workloads on data originating from Lakebase applications by leveraging Delta and Spark.

Week of Mar 30

8 videos

Tutorials

Your Delta Tables Deserve a Postgres Home

Databricks demonstrates syncing Delta tables from Unity Catalog to a Postgres database within Lakebase, enabling OLTP-style quick lookups for applications. Users can configure continuous, on-demand snapshot, or triggered sync modes, defining primary keys and grouping tables into pipelines for efficient data transfer.

Releases

Deploy Azure Databricks in 5 Minutes — VNET Injection + NAT Gateway

The video demonstrates how to deploy an Azure Databricks workspace with VNET injection and NAT Gateway in Azure. It walks through creating the necessary virtual network and subnets, then configuring the Databricks workspace to use them for secure outbound connectivity.

News

Never Build a Dashboard by Hand Again

The Databricks Assistant, now called Genie Code, can automatically generate multi-page dashboards from a blank canvas using natural language prompts. Users define a metric view as the data source and then describe the desired dashboard pages, visuals, and themes, with Genie Code planning and executing the build.

News

Lakebase: Postgres That Actually Likes Your Lakehouse

Lakebase is a new Databricks offering that provides a fully managed, autoscaling PostgreSQL database designed to bridge the gap between analytical and transactional workloads in a lakehouse architecture. It features bidirectional data streaming between Delta tables and PostgreSQL, database branching for isolated development, and Unity Catalog governance.

News

See Databricks Assistant Build a Metric View in 90 Seconds

The video demonstrates how Databricks Assistant can build a metric view in 90 seconds by generating YAML code for joins, dimensions, and measures from a natural language prompt. This metric view, a miniature semantic model, centralizes business logic and is queryable via SQL by various tools and agents.

Tutorials

54 Zerobus Ingest Lakeflow Standard Connector | Ingest Streaming data directly into Delta Table

The video demonstrates how to use Databricks Zerobus Ingest, a push-based API, to stream data such as IoT, event, and telemetry data directly into Unity Catalog Delta tables. It highlights how Zerobus Ingest simplifies streaming ingestion by eliminating the need to run and manage an intermediate message bus.

News

Databricks News: Excel add-in, Metrics Views UI, and Quality Monitoring

Databricks announced Lake Watch for cybersecurity, new dynamic dropdown filters in SQL editor, and improved quality monitoring with null value scanning and automated alerts. The video also demonstrates a new UI for defining metric views, an Excel add-in for data preview and import, and the ability to publish dashboards as public web pages.

News

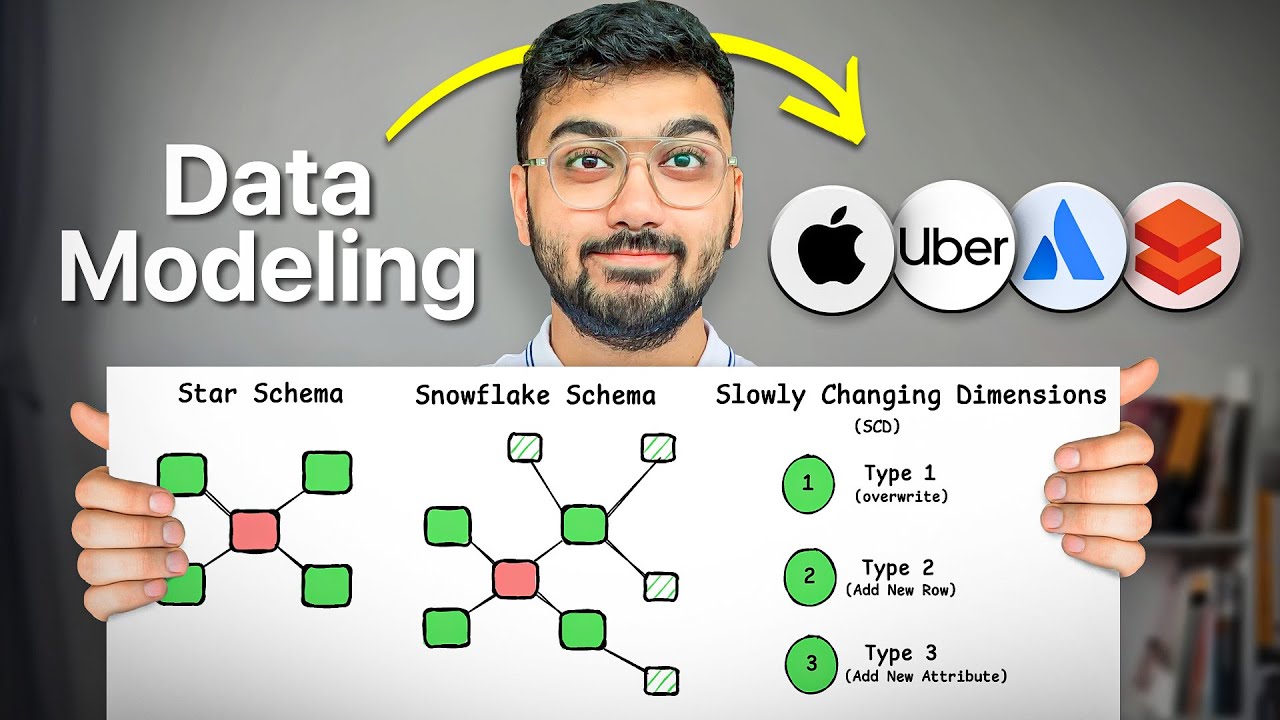

Master Dimensional Modeling Lesson 03 - Understand the ETL Pipeline

The video explains the typical stages of a data warehouse ETL pipeline, including pre-staging (raw data), staging (cleaned data), operational data store (snapshot), and data mart (star schema). It also details the benefits of having multiple stages, such as easier debugging, data recovery, and auditability, and how this maps to the Medallion Architecture (Bronze, Silver, Gold).

Week of Mar 23

3 videos

Tutorials

Databricks AI Dev Kit: Install for Copilot + VS Code

The video demonstrates how to install the Databricks AI Dev Kit for Visual Studio Code with GitHub Copilot on Windows, guiding users through the installation script, profile configuration, and skill selection. It then shows how to enable the Databricks tools in Copilot chat and tests its functionality by generating code and executing SQL queries against a Databricks workspace.

Releases

Introducing Pantheon - Agentic Engineering At Scale

Pantheon is a Databricks application that uses a multi-agent system to generate Lakeflow pipelines for data engineering, allowing users to define data ingestion and transformation rules through a conversational interface. It automates the design, validation, and code generation for lakehouse pipelines, enabling citizen engineers to build robust data solutions without deep PySpark knowledge.

News

Databricks News: Free Tier, Multi-statement transactions, Declarative Automation Bundles, Genie Code

Databricks now offers a free tier for Lakeflow Connect, providing 100 DBUs per day per workspace, and has introduced multi-statement transactions in Unity Catalog that ensure atomicity with rollback capabilities. The platform also announced a Databricks One mobile app, a new AI runtime with pre-installed tools for GPU use cases, and enhanced Genie Code that understands project structure for automated development tasks. Additionally, Databricks Asset Bundles are now called Declarative Automation Bundles and use a faster direct engine, and a new 5X-Large SQL warehouse is available for processing terabytes of data.

Week of Mar 16

2 videos

Tutorials

Databricks AI Dev Kit Demo - Install, DataGen, SDP, Dashboard

The video demonstrates installing the Databricks AI Dev Kit on a Mac, then uses it to generate synthetic data, create serverless Spark declarative pipelines for a medallion architecture, and build a Databricks dashboard based on the generated data. It highlights how the AI Dev Kit leverages skills and an MCP server to automate these development tasks.

Tutorials

53 Lakeflow Connect SQL Server Managed Connector | Ingest Data using Databricks native connectors

The video demonstrates how to ingest data from SQL Server into Databricks using Lakeflow Connect's managed connector, covering the setup of a SQL Server database, user permissions, and enabling change tracking/change data capture (CT/CDC). It then walks through configuring the Databricks connection, creating gateway and ingestion pipelines, and showcasing how SCD Type 2 changes are automatically managed.
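For readers new to SCD Type 2, the bookkeeping it involves can be sketched in plain Python: when a tracked attribute changes, the current row is closed and a new current row is appended, so history is preserved. This is a conceptual sketch only. The `apply_scd2` helper and the `start_ts`/`end_ts` field names are hypothetical and are not the column names or API the managed connector uses.

```python
def apply_scd2(history, key, new_attrs, change_ts):
    """Close the current row for `key` if its attributes changed, then append a new current row."""
    current = next(
        (row for row in history if row["key"] == key and row["end_ts"] is None),
        None,
    )
    if current is not None:
        if current["attrs"] == new_attrs:
            return history  # nothing changed, so no new version is recorded
        current["end_ts"] = change_ts  # close the previous version
    history.append({"key": key, "attrs": new_attrs, "start_ts": change_ts, "end_ts": None})
    return history


history = []
apply_scd2(history, 1, {"city": "Oslo"}, "2026-01-01")
apply_scd2(history, 1, {"city": "Oslo"}, "2026-01-15")    # unchanged, ignored
apply_scd2(history, 1, {"city": "Bergen"}, "2026-02-01")  # new version

for row in history:
    print(row)
```

The row with `end_ts` set to `None` is the current version; every other row records what the value was during a closed time range.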

Week of Mar 9

1 video

Week of Mar 2

2 videos

News

Databricks News: unit testing, OneLake federation, scoped access tokens

Databricks now allows creating Unity Catalog domains for business users, running JAR tasks on serverless compute, and federating OneLake data directly into Databricks. The platform also introduces in-workspace Python unit testing, new data connectors like HubSpot and TikTok Ads, and scoped personal access tokens for enhanced security.

News

OpenClaw, Databricks Agentic Data Monitoring & more! | AI Newsround - February 2026 | Advancing AI

The video discusses OpenClaw, an open-source framework for AI agents, and Databricks' new agentic data quality monitoring solution. It also introduces Advancing Analytics' Lake Forge and Pantheon, a framework and AI layer for developing scalable Lakeflow pipelines, and highlights new model releases from Anthropic, Google, and OpenAI.

Week of Feb 23

1 video

Week of Feb 16

4 videos

Tutorials

Databricks End-To-End Project | Zero-To-Expert | Streaming, AI, Lakeflow, Unity Catalog, AI/BI

This video demonstrates building an end-to-end restaurant analytics platform on Databricks, covering streaming and batch data ingestion, AI-powered sentiment analysis, and dashboard creation. It teaches how to use Unity Catalog, Lakeflow Connect for CDC, and Spark Declarative Pipelines for real-time data from Event Hub, and how to construct a medallion architecture with fact and dimension tables.

Releases

Introducing Databricks AI Dev Kit - Skills, MCP server, Builder App

The Databricks AI Dev Kit provides agent skills, an MCP server, and a Builder App to enhance AI-driven development on Databricks. It allows users to integrate AI coding tools with Databricks best practices, extending LLM capabilities through specialized functions and offering a chat-based interface for building applications.

News

Rethinking Wireframing - Building Power BI Reports with Agents

The video demonstrates an AI-powered agent that generates Power BI reports from natural language prompts and user context, significantly accelerating the initial report design and build process. This tool aims to reduce the traditional lengthy wireframing and iteration cycles by quickly producing functional, multi-page Power BI reports.

News

AI-Driven Development

AI-driven development is a workflow where AI is the primary engine for generating, validating, and maintaining code, shifting the developer's role to directing the AI. Key concepts include the context window (the amount of text an AI model can consider), tokens (processing units for text), and tool use (AI invoking external functions).
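The relationship between tokens and the context window can be illustrated with a small sketch that keeps only the most recent messages that fit within a token budget. The `fit_to_context` helper is hypothetical, and counting whitespace-separated words is a deliberately crude stand-in for a real tokenizer, which typically splits text into subword units.

```python
def fit_to_context(messages, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the most recent messages whose combined token count fits the window."""
    kept, used = [], 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # older messages no longer fit
        kept.append(message)
        used += cost
    return list(reversed(kept)), used


messages = [
    "load the sales table",
    "add a region column",
    "write unit tests for the pipeline",
]
window, used = fit_to_context(messages, max_tokens=10)
print(window, used)
```

With a budget of 10 word-tokens, the oldest message is dropped, which is the basic reason long sessions can lose track of early instructions.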

Week of Feb 9

1 video

Week of Feb 2

1 video

Week of Jan 26

2 videos

News

Databricks Breaking News: 2026 Week 4: 19 January 2026 to 25 January 2026

Databricks introduces temporary tables that are Unity Catalog managed, materialized, and allow DML operations, automatically cleaning up after a session or seven days. Materialized views now support refresh policies like incremental strict, which verifies if a view can be incrementally refreshed before deployment.

Tutorials

Master Databricks 2nd Ed: Lesson 4 - Use Databricks for Free!

Databricks now offers a free edition for learning purposes, providing access to most core features within a serverless environment without requiring a credit card. This free edition has limitations, including small compute resources, no custom cluster allocation, and the absence of R or Scala language support, and is not suitable for sensitive data or production use.

Week of Jan 19

1 video

Week of Jan 12

3 videos

News

Databricks Breaking News: Week 2026 02: 5 January 2026 to 11 January 2026 #databricks news

Databricks now allows changing catalog and schema during dashboard deployments, addressing a previous issue with environment-specific configurations. The Databricks CLI has a breaking change with plan version 2, altering the structure of deployment plans.

News

Vibe-Engineering LakeFlow Pipelines, the Advancing Analytics Way

Advancing Analytics introduces Lake Forge, an engineering framework that uses LLMs and an agentic workflow to generate standardized LakeFlow pipeline templates from data specifications. This system aims to enable scalable, repeatable, and supportable data pipeline creation by balancing AI-driven "vibe coding" with human-engineered guardrails and validation loops.

News

Turbo-Charge your Agents with instant MCP in Databricks

The video demonstrates how to use Model Context Protocol (MCP) in Databricks to give AI agents "superpowers" by enabling them to interact with various tools and data sources. It shows how to easily set up MCP servers within Databricks to connect agents to Unity Catalog functions, vector search, external APIs, and even marketplace MCP services, all without extensive coding.

Week of Jan 5

3 videos

Community

How Much DSA Do You Need To Crack Data Engineering Interviews?

Data engineers need to understand DSA concepts at an easy to medium level, focusing on practical applications like Big O intuition, arrays, hashmaps, and basic trees/graphs, rather than advanced algorithms. The video provides a practical DSA roadmap, differentiating between "must-knows," "good-to-knows" for stronger product/infra roles, and "overkill" topics for most classic data engineering interviews.

News

Claude Code: 5 Essentials for Data Engineering

The video introduces five essential concepts for using Claude Code in data engineering: the CLAUDE.md file for core project information, skills for packaging expertise, commands for predefined prompts, sub-agents for focused tasks, and Model Context Protocol (MCP) for standardized tool interaction. These components help manage context and memory for effective AI-enhanced development.

News

Databricks Breaking News: Week 2026 01: 29 December 2025 to 4 January 2026 #databricks news

Databricks now supports deploying asset bundles from a generated plan, enabling CI/CD integration for review and approval. Unity Catalog introduces new secret grants, and Runtime 18 brings "everywhere" implementations for literal string coalescing, parameter markers, and identifiers, along with window functions in metric views and general availability for SQL scripting.

Week of Dec 29, 2025

1 video

Week of Dec 22, 2025

2 videos

News

Databricks Breaking News: Week 51: 15 December 2025 to 21 December 2025 #databricks news

Databricks introduces new Lakeflow Connect features, including custom logic for declarative pipelines and new connectors for incremental data import from sources like Confluence, PostgreSQL, and MySQL. The platform also announces the deprecation of legacy features like Hive Metastore and DBFS for new accounts, alongside updates to Lakehouse ACLs, job scheduling from notebooks, flexible node types for cluster deployment, and expanded resource assignment in Databricks apps.

Community

Will AI REPLACE Data Engineers?

AI will not replace data engineers, but it will shift their role from typing code to designing solutions, guiding AI tools, and verifying outputs. Data engineers should focus on core coding fundamentals, system and product thinking, and effectively using AI and other tools.

Week of Dec 15, 2025

1 video

Week of Dec 8, 2025

1 video

Week of Dec 1, 2025

1 video

Week of Nov 24, 2025

4 videos

Tutorials

34 Write PySpark Unit Test Cases using PyTest module | Setup PyTest with PySpark

The video demonstrates how to write PySpark unit test cases using the Pytest module. It covers setting up Pytest, creating fixtures for Spark sessions, and writing test functions to validate PySpark transformations and filters.

News

Getting GenAI to Production with Mosaic AI Gateway in Databricks

The video demonstrates how to productionize GenAI applications using Databricks' Mosaic AI Gateway, highlighting features like usage tracking, inference tables, AI guardrails, rate limits, and model fallbacks. It shows how to configure these features through the Databricks UI and monitor application performance and costs using built-in dashboards.

News

Why YouTube NOT Udemy? #dataengineering #easewithdata #pyspark #databricks

The creator explains they offer free data engineering content on YouTube because they struggled to find good, affordable learning resources when they were starting out. They aim to provide high-quality, demo-rich content for free to prevent others from facing similar difficulties with paid, low-quality courses.

Tutorials

33 What is Spark Connect? | Spark Connect vs Spark Session | Setup Spark Connect Server with Cluster

Spark Connect decouples the client and server, allowing remote connection to Spark clusters using DataFrame APIs from various IDEs and languages, unlike Spark Session which tightly couples them and supports low-level RDD APIs. The video demonstrates setting up a Spark 3.5 cluster, starting a Spark Connect server, and running PySpark DataFrame operations remotely from VS Code.

Week of Nov 17, 2025

2 videos

Community

Apache Spark Was Hard Until I Learned These 30 Concepts!

The video explains 30 key Apache Spark concepts, starting with a comparison to MapReduce to highlight Spark's in-memory processing and DAG-based execution model. It then details Spark's cluster architecture, job execution flow (driver, executors, tasks), and memory management within executor containers.

Tutorials

04_2 - Setup PySpark in Local Machine with Jupyter Lab | PySpark Local Machine Setup

The video demonstrates setting up PySpark with Jupyter Lab on a local machine using Docker, first as a standalone instance and then as a multi-node cluster. It walks through installing Docker Desktop, pulling a PySpark Jupyter Lab image from Docker Hub, configuring ports, and verifying the setup by running a basic PySpark job.

Week of Nov 10, 2025

1 video

Week of Oct 13, 2025

1 video

Week of Oct 6, 2025

1 video

Week of Sep 29, 2025

2 videos

Tutorials

Master Databricks 2nd Ed: Lesson 3 - Understanding Clusters

This video explains Databricks clusters, detailing their components like driver and worker nodes, configuration options such as autoscaling and Photon acceleration, and how to create and manage them within Azure. It also covers common interview questions related to cluster sizing and performance tuning, emphasizing that Databricks clusters are essentially Spark clusters enhanced with the Databricks runtime for cloud environments.

Tutorials

Databricks + Cursor IDE: Step-by-Step AI Coding Tutorial

The video demonstrates using Cursor IDE for AI-enhanced Databricks development, focusing on setting up Databricks Connect and leveraging Cursor rules and context for efficient code generation and testing. It shows how to structure projects, write Python and PySpark code, and create unit tests, highlighting the importance of providing clear instructions to the AI agent.

Week of Sep 15, 2025

3 videos

Events

Databricks One - First look at the New Databricks Consumer UI

Databricks One is a new, simplified user interface for Databricks designed for data consumers, offering a less technical, less cluttered experience for viewing dashboards and Genie spaces. It provides a streamlined way to access existing Databricks content, with features like a central search bar and recommended items. To display thumbnails, dashboards must be published with embedded credentials and run on a schedule.

Tutorials

Unity Catalog Metric Views - Why you should care about Databricks' new Semantic Models

Unity Catalog Metric Views are Databricks' new semantic models, allowing users to define business-friendly names, dimensions, and context-sensitive measures for data. These views centralize KPI definitions, enabling consistent use across dashboards, AI tools, and downstream BI platforms, and are created using YAML.

News

Ask Me Anything: Data Governance in the Age of AI

The video discusses the impact of AI on traditional data governance, emphasizing that governance should act as guardrails for safe innovation rather than a restrictive cage. It highlights the need to adapt governance for AI agents, moving beyond human-centric documentation to formalized tags and classifications that enable AI to make accurate decisions about data usage.

Week of Sep 1, 2025

2 videos

News

Databricks Stored Procedures

Databricks now supports SQL stored procedures, enabling users to encapsulate and execute multiple SQL statements as a single, callable unit. This feature primarily facilitates migrating existing SQL-based ETL logic from legacy systems into Databricks, rather than serving as the recommended approach for new ETL development.

News

Databricks: What’s new in September 2025? #databricks

Databricks now supports geospatial data types (geography and geometry) with new functions for visualization and spatial operations, and introduces serverless GPU clusters for distributed GPU code execution. The platform also offers enhanced notebook features like side-by-side editing and a notebook-specific search, along with new options for managing serverless environments, SQL warehouses, and access requests in Unity Catalog.

Week of Aug 25, 2025

1 video

Week of Aug 18, 2025

1 video

Week of Aug 11, 2025

3 videos

Tutorials

51 Setup Azure DevOps Pipeline with Databricks Asset Bundles (DABs) | Complete CICD Process

The video demonstrates how to set up an Azure DevOps pipeline to deploy Databricks Asset Bundles (DABs) to higher environments like QA. It covers configuring service principal permissions, setting up Azure pipeline variables for environment-specific details, and writing the YAML pipeline code to validate and deploy Databricks assets.

Tutorials

How to use Recursive CTEs in Databricks

The video demonstrates how to use recursive CTEs in Databricks to traverse hierarchical data structures of unknown depth, such as data lineage or organizational charts. It shows how to write a recursive CTE in SQL, highlighting the `RECURSIVE` keyword and the union of an anchor member and a recursive member.
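
The anchor-plus-recursive-member shape described above can be sketched in a few lines. This is a minimal illustration, not the video's own code: it runs the query against Python's built-in SQLite rather than Databricks, and the `org` table, its columns, and the `walk_hierarchy` helper are hypothetical names chosen for the example. Databricks SQL syntax may differ in details.

```python
import sqlite3

# Hypothetical org chart as (employee, manager) pairs; the root has no manager.
ROWS = [
    ("ceo", None),
    ("vp_eng", "ceo"),
    ("vp_sales", "ceo"),
    ("data_lead", "vp_eng"),
    ("data_engineer", "data_lead"),
]

QUERY = """
WITH RECURSIVE chain (employee, depth) AS (
    -- Anchor member: the starting rows, here the root of the hierarchy.
    SELECT employee, 0 FROM org WHERE manager IS NULL
    UNION ALL
    -- Recursive member: join the rows found so far back to the base table.
    SELECT o.employee, c.depth + 1
    FROM org AS o
    JOIN chain AS c ON o.manager = c.employee
)
SELECT employee, depth FROM chain ORDER BY depth, employee
"""


def walk_hierarchy(rows):
    """Load rows into an in-memory SQLite table and return (employee, depth) pairs."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE org (employee TEXT, manager TEXT)")
        conn.executemany("INSERT INTO org VALUES (?, ?)", rows)
        return conn.execute(QUERY).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    # Print the hierarchy indented by depth.
    for employee, depth in walk_hierarchy(ROWS):
        print("  " * depth + employee)
```

The recursion stops on its own once the recursive member returns no new rows, which is why the depth of the hierarchy does not need to be known in advance.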

Tutorials

50 Databricks Asset Bundles | Configure Production grade DABs | CICD using DABs (IAC)

The video demonstrates how to configure and deploy Databricks Asset Bundles (DABs) for managing Databricks assets like notebooks, jobs, and pipelines across different environments. It covers creating a structured DAB project, defining resources and targets in YAML, and deploying using both the Databricks UI and CLI, including setting up environment-specific configurations and variables.

Week of Aug 4, 2025

1 video

Week of Jul 28, 2025

5 videos

Tutorials

48 Databricks GIT Folders | Configure GIT repository with Databricks using Azure DevOps Repo

News

AI/BI Dashboards - July 2025 Updates

Events

Co-ordinating AI/BI Genie Spaces with Databricks Agent Bricks

Tutorials

47 AIBI Genie Space in Databricks | Use Natural Language to Query data

News

The $500k+ Data Engineering Roadmap: Exact Study Plan & Resources

Week of Jul 21, 2025

2 videos

Week of Jul 7, 2025

2 videos

Week of Jun 30, 2025

1 video

Week of Jun 23, 2025

3 videos

Week of Jun 16, 2025

1 video

Week of May 19, 2025

1 video

Week of Apr 21, 2025

1 video

Week of Apr 7, 2025

1 video

Week of Mar 24, 2025

1 video

Week of Mar 17, 2025

1 video

Week of Mar 10, 2025

1 video

Week of Feb 24, 2025

1 video

Week of Feb 3, 2025

2 videos

Week of Jan 27, 2025

1 video

Week of Jan 20, 2025

2 videos

Week of Jan 6, 2025

1 video

Week of Dec 30, 2024

3 videos

Week of Dec 16, 2024

1 video

Week of Nov 18, 2024

1 video

Week of Nov 4, 2024

1 video

Week of Oct 14, 2024

2 videos

Week of Sep 30, 2024

2 videos

Week of Sep 23, 2024

1 video

Week of Aug 26, 2024

1 video

Week of Aug 19, 2024

1 video

Week of Aug 12, 2024

1 video

Week of Jul 29, 2024

1 video

Week of Jul 22, 2024

1 video

Week of Jul 15, 2024

1 video

Week of Jul 8, 2024

2 videos

Week of Jul 1, 2024

2 videos

Week of Jun 24, 2024

2 videos

Week of Jun 17, 2024

1 video

Week of Jun 3, 2024

1 video

Week of May 27, 2024

2 videos

Week of May 6, 2024

1 video

Week of Apr 8, 2024

1 video

Week of Apr 1, 2024

1 video

Week of Mar 18, 2024

4 videos

News

Apache Spark Memory Management

News

Learn Databricks: Challenge #1

Tutorials

How to read files with Databricks SQL # 5/6 of file handling series

Community

Understanding Databricks & Apache Spark Performance Tuning: Lesson 01 - Spark Architecture

Week of Mar 11, 2024

1 video

Week of Mar 4, 2024

3 videos

News

Master Dimensional Modeling Lesson 02 - The 4 Step Process

Tutorials

How to use DBUTILS. Part 3/6 of handling FILES with Databricks series.

Tutorials

How to use DBFS. Part 2/6 of Handling FILES with Databricks series.

Week of Feb 26, 2024

3 videos

Week of Feb 19, 2024

2 videos

Week of Feb 5, 2024

1 video

Week of Jan 22, 2024

5 videos

Tutorials

Databricks CERTIFICATION overview and registration in 2024. (including Databricks Academy)

Tutorials

Databricks interview question #3

News

Master Data Workload Automation: Introduction

Tutorials

Databricks Notebooks. From zero to advance.

Tutorials

Solve Databricks interview question #2

Week of Jan 15, 2024

2 videos

Week of Jan 8, 2024

2 videos

Week of Jan 1, 2024

1 video

Week of Dec 25, 2023

2 videos

Week of Dec 11, 2023

1 video

Week of Dec 4, 2023

1 video

Week of Nov 27, 2023

3 videos

Week of Nov 20, 2023

1 video

Week of Nov 13, 2023

1 video

Week of Oct 9, 2023

1 video

Week of Oct 2, 2023

3 videos

News

119. Databricks | Pyspark| Spark SQL: Except Columns in Select Clause

Tutorials

Databricks CI/CD: Intro to Databricks Asset Bundles (DABs)

Community

118. Databricks | PySpark| SQL Coding Interview: Employees Earning More than Managers

Week of Sep 18, 2023

3 videos

Week of Sep 11, 2023

1 video

Week of Sep 4, 2023

3 videos

Community

115. Databricks | Pyspark| SQL Coding Interview: Number of Calls and Total Duration

Tutorials

Broadcast Joins & AQE (Adaptive Query Execution)

Tutorials

Tutorials